Software Development Expertise

TL;DR: Being an expert in software development requires a certain set of skills, knowledge, and experience. We present a first conceptual theory of software development expertise that is grounded in data from a mixed-methods survey with 335 software developers and in literature on expertise and expert performance. The theory describes central properties of software development expertise and which factors foster or hinder its formation, including how developers’ performance may decline over time. Moreover, our quantitative results show that developers’ expertise self-assessments are context-dependent and that experience is not necessarily related to expertise.

Article on dev.to

Motivation

An expert is, according to Merriam-Webster, someone “having, involving, or displaying special skill or knowledge derived from training or experience”. For some areas, such as playing chess, there exist representative tasks and objective criteria for identifying experts. In software development, however, it is more difficult to find objective measures for quantifying expert performance. One reason is that software development itself includes diverse tasks such as implementing new features, analyzing requirements, and fixing bugs. In the past, researchers investigated certain aspects of software development expertise such as the influence of programming experience, desired attributes of software engineers, or the time it takes for developers to become “fluent” in software projects. However, there is currently no theory combining those individual aspects. Such a theory could help structure existing knowledge about software development expertise in a concise and precise way and hence facilitate its communication. With our paper, we contribute a first theory that describes central properties of software development expertise and important factors influencing its formation. The theory focuses on individual software developers working on different software development tasks, having the long-term goal of becoming experts in those tasks. It is grounded in data from a mixed-methods survey with 335 participants and in literature on expertise and expert performance. Our expertise model is task-specific and currently focuses on programming-related tasks, but includes the notion of transferable knowledge and experience from related fields or tasks. Our process theory is a first step towards the long-term goal of building a variance theory that can explain and predict why and when a software developer reaches a certain level of expertise.

Conceptual Theory

In this blog post, we skip the details of our research design and focus on the resulting conceptual theory, summarizing implications for researchers, developers, and employers.

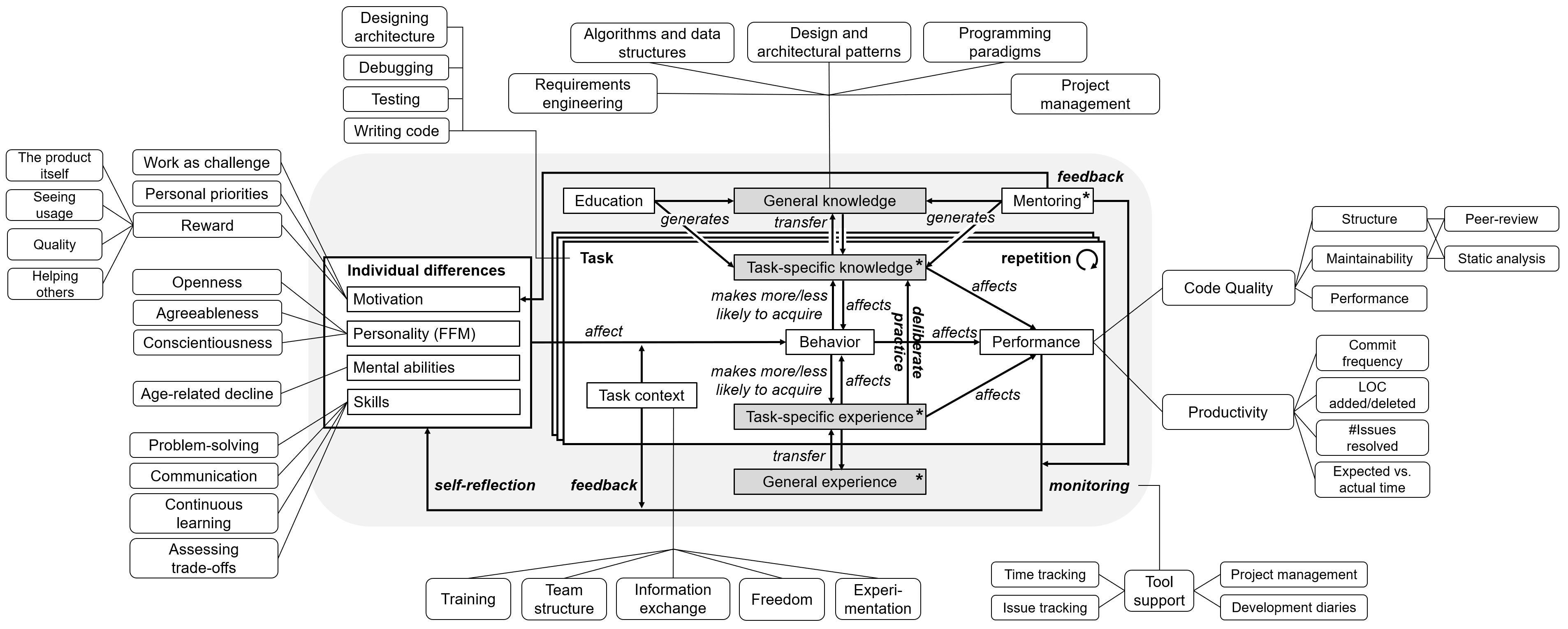

The above figure visualizes the high-level concepts of our conceptual theory together with their relationships. Some relationships are intentionally labeled with rather generic terms such as “affects” or “generates”, because more research is needed to investigate them. The theory describes the process of software development expertise formation, that is, the path of an individual software development novice towards becoming an expert. This path consists of gradual improvements with many corrections and repetitions. Therefore, we do not describe discrete steps like, for example, the 5-stage Dreyfus model of skill acquisition (see Section “Experience vs. Expertise”). Instead, we focus on the repetition of individual tasks. In the following, we will describe the core of our theory (gray background in the figure). In particular, we will elaborate on the role of monitoring, feedback, and self-reflection, and connect the corresponding feedback cycle with the concept of deliberate practice. All concepts and relationships of our theory are described in more detail in the paper.

Knowledge and Experience

Knowledge can be defined as a “permanent structure of information stored in memory”. Some researchers consider a developer’s knowledge base as the most important aspect affecting their performance. Studies with software developers suggest that “the knowledge base of experts is highly language dependent”, but experts also have “abstract, transferable knowledge and skills”. We modeled this aspect in our theory by dividing the central concepts knowledge and experience, which we derived from a first iteration of our online survey and from related work, into a task-specific and a general part. This is a simplification in our model, because the relevance of knowledge and experience is a continuum rather than a dichotomy. However, Shneiderman and Mayer, who developed a behavioral model of software development, used a similar differentiation between general (“semantic”) and specific (“syntactical”) knowledge. General knowledge and experience refer not only to technical aspects (e.g., low-level computer architecture) or general concepts (e.g., design patterns), but also to knowledge about and experience with successful strategies.

Many participants referred to the importance of the quantity of software development experience (e.g., in terms of years), but some also described its quality. Examples for the latter include having built “everything from small projects to enterprise projects” or “[experience] with many codebases.” In particular, participants considered professional experience, for example having “shipped a significant amount of code to production or to a customer”, and working on shared code to be important factors. Examples for general knowledge include “paradigms […], data structures, algorithms, computational complexity, and design patterns”, whereas task- or language-specific expertise includes having an “intimate knowledge of the design and philosophy of the language” and knowing “the shortcomings of the language […] or the implementation […].”

Tasks

Since our model of software development expertise is task-specific, we asked our participants to name the three most important tasks that a software development expert should be good at. The three most frequently mentioned tasks were designing software architecture, writing source code, and analyzing and understanding requirements. Many participants not only mentioned the tasks, but also certain quality attributes associated with them, for example

“architecting the software in a way that allows flexibility in project requirements and future applications of the components”

and “writing clean, correct, and understandable code”. Other mentioned tasks include testing, communicating, staying up-to-date, and debugging. Our theory currently focuses on tasks directly related to programming, but our participants’ responses show that it is important to broaden this view in the future to include, for example, tasks related to requirements engineering (analyzing and understanding requirements) or the adoption of new technologies (staying up-to-date).

Motivation

Related work describes certain individual differences that affect whether and how people come closer to their goal of being an expert in a certain domain. Mental abilities and personality are quite stable, but motivation in particular can change over time and influence the way people approach software development. Many of the participants in our study were intrinsically motivated, stating that problem solving is their main motivation. One participant wrote that solving problems “makes [him] feel clever, and powerful.” Another participant compared problem solving to climbing a mountain:

“I would equate that feeling [of getting a feature to work correctly after hours and hours of effort] to the feeling a mountain climber gets once they reach the summit of Everest.”

Many developers enjoy seeing the result of their work. They are particularly satisfied to see a solution which they consider to be of high quality. One participant wrote that (s)he gets “a feeling of satisfaction from creating an elegant piece of code.” Moreover, some participants mentioned refactoring as a rewarding task:

“The initial design is fun, but what really is more rewarding is refactoring.”

Others stressed the importance of creating something new or helping others. Interestingly, money was only mentioned by few participants as a motivation for their work.

Performance

At this point, our goal is not to treat performance as a dependent variable that we try to explain for individual tasks. Rather, we consider different performance monitoring approaches to be a means for feedback and self-reflection (see below). For our long-term goal to build a variance theory for explaining and predicting the development of expertise, it will be more important to be able to accurately measure developers’ performance. However, participants mentioned different properties that source code of experts should possess: It should be well-structured and readable, contain “comments when necessary”, be “optimized” in terms of “performance” and sustainable in terms of maintainability. One participant summarized the code that experts write as follows:

“Everyone can write […] code which a machine can read and process but the key lies in writing concise and understandable code which […] people who have never used that piece of code before [can read].”

To identify factors hindering expertise development, we asked participants whether they ever observed a significant decline of their own programming performance or the performance of co-workers over time. In our study, 41.5% of the participants who answered that question had observed such a decline. We asked those participants to describe how the decline manifested itself and to suggest possible reasons. The main reasons for performance decline were demotivation, changes in the work environment, age-related decline, changes in attitude, and shifting towards other tasks. Related to problem solving being one of the main motivations (see above), the most common reason for demotivation was non-challenging work, often caused by tasks becoming routine over time. One participant described this effect as follows:

“I perceived an increasing procrastination in me and in my colleagues, by working on the same tasks over a relatively long time (let’s say, 6 months or more) without innovation and environment changes.”

Other reasons included not seeing a clear vision or direction in which the project is or should be going and missing reward for high-quality work, which was described above as one of the main factors motivating developers. Regarding the work environment, participants named stress due to tight deadlines or economic pressure (“the company’s economic condition deteriorated”). Moreover, bad management or team structure were named, for example:

“[h]aving a supervisor/architect who is very poor at communicating his design goals and ideas, and refuses to accept that this is the case, even when forcibly reminded.”

Changes in attitude may happen due to personal issues (e.g., getting divorced) or due to shifting priorities (e.g., friends and family getting more important). When developers are being promoted to team leader or manager, they “shift towards other tasks”, resulting in a declining programming performance.

Participants also described age-related performance decline. We consider this phenomenon, and the resulting consequences for individual developers and the organization, to be an important area for future research. To illustrate the effects that age-related decline may have, we provide two verbatim quotes by experienced developers:

“For myself, it’s mostly the effects of aging on the brain. At age 66, I can’t hold as much information short-term memory, for example. In general, I am more forgetful. I can compensate for a lot of that by writing simpler functions with clean interfaces. The results are still good, but my productivity is much slower than when I was younger.” (software architect, age 66)

“Programming ability is based on desire to achieve. In the early years, it is a sort of competition. As you age, you begin to realize that outdoing your peers isn’t all that rewarding. […] I found that I lost a significant amount of my focus as I became 40, and started using drugs such as ritalin to enhance my abilities. This is pretty common among older programmers.” (software developer, age 60)

Task Context

The task or work context can either foster or hinder developers on their way to becoming an expert in certain software development tasks. To investigate its influence on expertise development, we asked what employers should do in order to facilitate a continuous development of their employees’ software development skills. We grouped the responses into four main categories: encourage learning, encourage experimentation, improve information exchange, and grant freedom. To encourage learning, employers may offer in-house training or pay for external training courses, pay employees to visit conferences, provide a good analog and/or digital library, and offer monetary incentives for self-improvement. The most frequently named means to encourage experimentation were motivating employees to pursue side projects and building a work environment that is open to new ideas and technologies. To improve information exchange between development teams, between different departments, or even between different companies, participants proposed to facilitate meetings such as agile retrospectives, “Self-improvement Fridays”, “lunch-and-learn sessions”, or “Technical Thursday” meetings. Such meetings could explicitly target information exchange or skill development. Besides dedicated meetings, participants considered developers rotating between teams, projects, departments, or even companies to foster expertise development. To improve the information flow between developers, practices such as mentoring or code reviews were mentioned. Finally, granting freedom, primarily in the form of less time pressure, would allow developers to invest in learning new technologies or skills.

Deliberate Practice

Having more experience with a task does not automatically lead to better performance. Research has shown that once an acceptable level of performance has been attained, additional “common” experience has only a negligible effect; in many domains, the performance even decreases over time. The length of experience has been found to be only a weak correlate of job performance after the first two years; what matters is the quality of the experience. According to Ericsson et al., expert performance can be explained with “prolonged efforts to improve performance while negotiating motivational and external constraints”. For them, deliberate practice, meaning activities and experiences that are targeted at improving one’s own performance, is needed to become an expert. Traditionally, research on deliberate practice concentrated on acquired knowledge and experience to explain expert performance. However, later studies have shown that deliberate practice is necessary, but not sufficient, to achieve high levels of expert performance; individual differences play an important role.

A central aspect of deliberate practice is monitoring one’s own performance, and getting feedback, for example from a teacher or coach. Generally, such feedback helps individuals to monitor their progress towards goal achievement. Moreover, as Tourish and Hargie note:

“[T]he more channels of accurate and helpful feedback we have access to, the better we are likely to perform.”

In areas like chess or physics, studies have shown that experts have more accurate self-monitoring skills than novices. In our model, the feedback relation is connected to the concept task context, as we assumed that feedback for a software developer most likely comes from co-workers or supervisors. Moreover, mentoring provides feedback and was identified in our study as an important source of motivation. To close the cycle, (self-)monitoring and reflection also influence developers’ motivation and consequently their behavior.

Monitoring and Self-reflection

In our survey, we asked participants whether they regularly monitor their software development activities. 38.7% of the 204 participants who answered that question said that they do. The most important monitoring activity was peer review, where participants mentioned asking co-workers for feedback, doing code review, or doing pair programming. One participant mentioned that he tries to “take note of how often [he] win[s] technical arguments with [his] peers.” Participants also mentioned time tracking tools like WakaTime or RescueTime, issue tracking systems like Jira or GitHub issues, and project management tools like Redmine and Scrum story points as sources for feedback, comparing expected to actual results (e.g., time goals or number of features to implement). Moreover, some developers mentioned writing a development diary. Participants reported using simple metrics such as the commit frequency, lines of code added/deleted, or the number of issues resolved. Further, they reported using static analysis tools such as SonarQube, FindBugs, and Checkstyle, or GitHub’s activity overview. Some participants were doubtful regarding the usefulness of metrics. One participant noted:

“I do not think that measuring commits [or] LOC […] automatically is a good idea to rate performance. It will raise competition, yes, but not the one an employer would like. It will just get people to optimize whatever is measured.”

The described phenomenon is also known as Goodhart’s law.
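The comparison of expected and actual results that participants described can be sketched in a few lines of Python. This is only a minimal illustration, not a tool from our study: the `Task` record, its field names, and the example numbers are all hypothetical, and the ratio of actual to estimated hours is just one of many possible measures.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class Task:
    """One finished task; all fields hold hypothetical illustration data."""
    name: str
    estimated_hours: float
    actual_hours: float


def estimation_ratio(task: Task) -> float:
    """Actual divided by estimated effort; 1.0 means the estimate was exact."""
    return task.actual_hours / task.estimated_hours


def summarize(tasks: list[Task]) -> dict[str, float]:
    """Aggregate the ratios into a small summary for personal reflection."""
    ratios = [estimation_ratio(t) for t in tasks]
    return {
        "mean_ratio": mean(ratios),
        "worst_ratio": max(ratios),
        # Share of tasks that took longer than estimated.
        "share_overrun": sum(r > 1.0 for r in ratios) / len(ratios),
    }


# Made-up entries, e.g. copied by hand from a time tracker or diary.
tasks = [
    Task("login form", 4.0, 6.0),
    Task("csv export", 8.0, 8.0),
    Task("bug triage", 2.0, 1.0),
    Task("api client", 5.0, 10.0),
]
summary = summarize(tasks)
print(summary)
```

Given the caveat about optimizing whatever is measured, numbers like these are probably best kept as a private aid for self-reflection rather than used to rate performance.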

Experience vs. Expertise

Since software developers’ expertise is difficult to measure, researchers often rely on proxies for this abstract concept. We investigated the relationship and validity of the two proxies length of experience and self-assessed expertise to provide guidance for researchers. We found that neither developers’ experience measured in years nor the self-assessed programming expertise ratings yielded consistent results across all analyzed settings. We were also interested in the validity of self-assessed expertise, which is, like other self-reports, context-dependent. The validity of self-assessed expertise is related to the concept of self-reflection, but also has methodological implications for software engineering research in general, because self-assessed programming expertise is often used in studies with software developers to differentiate between novices and experts. To analyze the influence of question context on expertise self-assessments, we asked participants for two self-assessments of their Java expertise: one using a semantic differential scale from novice to expert (without further context) and one based on the Dreyfus model of skill acquisition (with context for each stage). We found that only the experienced developers tended to adjust their self-assessments to a higher rating after we provided context in the form of the Dreyfus model. A possible interpretation of this result could be found in the Dunning-Kruger effect: Kruger and Dunning found that participants with a high skill level underestimate their ability and performance relative to their peers. This may have happened in our sample with experienced developers when they assessed their Java expertise using the semantic differential scale. When we provided context in the form of the Dreyfus model, they adjusted their ratings to a more adequate rating, whereas the less experienced developers stuck to their, possibly overestimated, ratings.
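One simple way to check how two such proxies relate in a given sample is a rank correlation. The sketch below computes Spearman’s rho in plain Python. It is an illustration under stated assumptions and does not reproduce the analysis or the data from our paper: the function names, the choice of Spearman’s rho, and the example values for years of experience and self-assessed ratings are all made up for this post.

```python
from statistics import mean


def average_ranks(values):
    """Rank values from 1 to n; tied values share the mean of their positions."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    start = 0
    while start < len(order):
        end = start
        # Extend the group while the next value ties with the current one.
        while end + 1 < len(order) and values[order[end + 1]] == values[order[start]]:
            end += 1
        shared = (start + end) / 2 + 1  # mean of the 1-based positions
        for k in range(start, end + 1):
            ranks[order[k]] = shared
        start = end + 1
    return ranks


def pearson(xs, ys):
    """Pearson correlation coefficient of two equally long sequences."""
    mx, my = mean(xs), mean(ys)
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    var_x = sum((x - mx) ** 2 for x in xs)
    var_y = sum((y - my) ** 2 for y in ys)
    return cov / (var_x * var_y) ** 0.5


def spearman(xs, ys):
    """Spearman's rho: the Pearson correlation of the rank-transformed data."""
    return pearson(average_ranks(xs), average_ranks(ys))


# Hypothetical sample: years of experience and a self-assessed rating (1 to 6).
years = [1, 3, 5, 10, 20]
rating = [2, 4, 3, 5, 4]
rho = spearman(years, rating)
print(round(rho, 3))
```

A value of rho well below 1 in such a sample would only indicate that the two proxies order developers differently; it would not say which of them, if either, reflects actual expertise.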

Conclusion

In this blog post, we presented core concepts of our conceptual theory of software development expertise, which is grounded in data from an online survey with 335 software developers and in existing literature on expertise and expert performance. We described different properties of software development expertise and factors fostering or hindering its development. In particular, we described developers’ motivation and performance decline and how the task context and deliberate practice can foster expertise development.

Researchers can use our methodological findings about (self-assessed) expertise and experience when designing studies involving self-assessments. If researchers have a clear understanding of what distinguishes novices and experts in their study setting, they should provide this context when asking for self-assessed expertise and later report it together with their results. In our theory, we did not describe expertise development in discrete steps, but a direction for future work could be to at least develop a standardized description of novice and expert for certain tasks, which could then be used in semantic differential scales. To design concrete experiments measuring certain aspects of software development expertise, one needs to operationalize our conceptual theory. In our paper, we already linked concepts to existing measurement instruments such as UMS (motivation), WAIS-IV (mental abilities), or IPIP (personality) and also mentioned static analysis tools to measure code quality and basic productivity measures. This enables researchers to design experiments, but also to re-evaluate results from previous experiments. There are, for example, no coherent results about the connection of individual differences and programming performance yet.

Software developers can use our results to see which properties are distinctive for experts in their field, and which behaviors may lead to becoming a better software developer. For example, the concept of deliberate practice, and in particular having challenging goals, a supportive work environment, and getting feedback from peers are important factors. Besides peer feedback, developers can use different code quality and productivity measures and tools to monitor their performance and reflect on their progress in becoming better software developers. As outlined above, phenomena like the Dunning-Kruger effect may affect developers’ ability to self-reflect. However, by establishing a combination of metrics, tools, peer review, and mentoring, developers can try to mitigate such biases. The task context is a factor that can heavily influence the ability of developers to improve their software development skills, but is often out of their control. Once developers are in a team lead or management role, they can try to establish some of our participants’ suggestions to build a supportive work environment (see below).

Employers can use our results to learn the typical reasons for demotivation among their employees, and how they can build a work environment supporting the self-improvement of their staff. They should be aware that problem solving is one of the main motivations for software developers, which explains why the main factor for demotivation is non-challenging work. To avoid tasks becoming routine over time and thus being perceived as non-challenging, employers should make sure to have a good mix of continuity and change in their software development process. They should also communicate clear visions, directions, and goals and reward high-quality work wherever possible. Besides obvious strategies for expertise development such as offering training sessions or paying for conference visits, our results suggest that employers should think carefully about how information is shared between their developers and also between the development team and other departments of the company. Facilitating meetings that explicitly target information exchange and learning new skills should be a priority of every company that cares about the development of its employees.

More Information

A more detailed description of our research design and results can be found in our research paper. If you have any further questions, do not hesitate to contact me.